TL;DR: Training will remain centralized. The real debate is who owns inference, the layer where AI interacts with the physical world. If the mobile shift created iOS and Android, the edge AI shift will create a new orchestration layer for distributed models. That category still lacks a clear winner.

The Edge

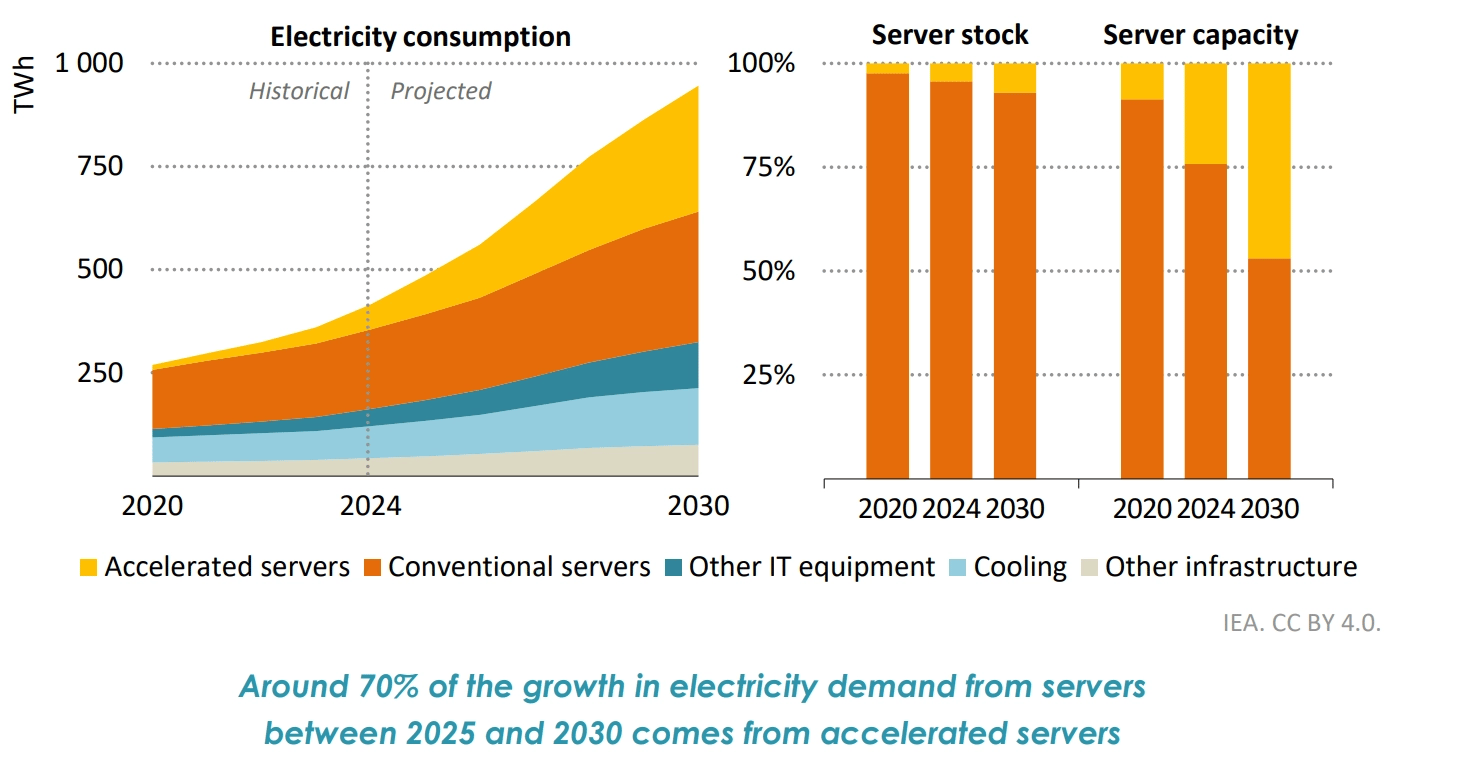

For the past few years, commentary on AI infrastructure has revolved around extensive build-outs and the energy demands of data centers. The narrative is now shifting to efficiency: operational capacity is under pressure because larger models functionally require more compute. AI workloads currently consume 10-20% of data center power, a share projected to reach 35-50% by 2030, which poses political and cost challenges for anyone seeking to run inference at scale.

There are also structural constraints: latency, data privacy, and the cost of paying a hyperscaler. The economics can work for consumer apps, where usage is lighter, but enterprises that make latency-sensitive decisions thousands of times per minute often struggle with both the cost and the round-trip delay of cloud-based inference.

Big Tech is already voting with its silicon

Meta's newly unveiled MTIA 300 chip is purpose-built for inference, not training, and its broader chip roadmap through 2027 is explicitly designed to run AI workloads internally rather than depend on general-purpose GPUs. NVIDIA is making similar moves, partnering on high-fidelity digital twin technology that simulates millions of miles of autonomous vehicle edge-case scenarios locally rather than routing data back to the cloud.

The industrial side is where the thesis gets even more concrete. Companies like Mind Robotics, spun out of Rivian, are built entirely around training on live factory data and running inference inside the factory. The data flywheel only works if it's grounded in a real manufacturing environment (the setup is essentially built to avoid a cloud use case), and on-device inference at that scale significantly reduces per-inference cost and energy consumption compared to routing every request through the cloud.

Where Value Accrues

You can see a clear parallel to what we all watched play out in mobile. When compute shifted from desktop to pocket, it didn't just create new device companies; it created entirely new layers of the stack: operating systems, app stores, developer tools, and vertically integrated platforms that hadn't existed before.

Edge AI is likely to follow a similar pattern. The hardware winners are becoming increasingly visible (purpose-built inference ASICs). The software and deployment layer is still wide open.

Several players are already staking out this territory, including NVIDIA Fleet Command and AWS IoT Greengrass for enterprise deployments, KubeEdge for distributed orchestration, Edge Impulse for embedded and IoT-specific ML, and Hugging Face Optimum paired with ONNX Runtime for efficient model inference. No clear orchestration winner has emerged yet, which is exactly what makes this layer interesting.

The most valuable layer may not be the silicon but the orchestration software that manages deployment, updates, security, and monitoring of models running across thousands of distributed devices. In the cloud era, that role was filled by platforms like AWS and Kubernetes. The edge AI equivalent has not yet emerged.

For industrial verticals specifically, the combination of proprietary training data, domain-specific models, and on-premise deployment is a stack that looks very different from anything the last wave of SaaS produced.

Takeaway: Training will remain centralized. The real debate is who owns inference, the layer where AI interacts with the physical world. If the mobile shift created iOS and Android, the edge AI shift will create a new orchestration layer for distributed models. That category still lacks a clear winner.

Have a great weekend,

Josh